Augmenting Justice While Preserving Human Judgment

- aslawforai

- 24 Apr 2025

- Reading time: 3 min

By Antonina Maria De Francesco

The integration of artificial intelligence into legal systems presents transformative opportunities for efficiency and accessibility, yet demands rigorous safeguards to prevent erosion of procedural fairness and judicial accountability.

The adoption of artificial intelligence in courtrooms has accelerated globally, driven by mounting caseloads and aspirations for data-driven objectivity. Tools like predictive sentencing algorithms and AI legal research assistants are reshaping litigation strategies and judicial workflows. However, as jurisdictions experiment with algorithmic decision-making, from Estonia’s proposed “robot judges” for small claims [^4] to U.S. courts using COMPAS for bail recommendations [^3], critical questions emerge about how to balance technological innovation with the irreplaceable human elements of justice.

Current Applications: Efficiency Gains and Emerging Concerns

AI’s most widely adopted courtroom applications focus on augmenting human capabilities rather than replacing judges. Legal research platforms like Harvey AI leverage natural language processing to analyse case law across jurisdictions, enabling lawyers to identify precedents 83% faster than manual methods [^1]. Founded by Winston Weinberg and Gabriel Pereyra, the company has reached a $715 million valuation, reflecting its adoption by elite firms like Allen & Overy, where 3,500 lawyers tested it on 40,000 queries [^1]. Similarly, Lex Machina’s Outcome Analytics™ uses proprietary algorithms to predict judicial behaviour and case outcomes through historical verdict patterns [^2]. Proponents argue these systems reduce costly human errors, a vital benefit given that England’s Crown Courts faced 66,547 pending cases in late 2023, including 1,063 defendants remanded for over two years [^5].

Nevertheless, risk-assessment algorithms in criminal justice reveal deeper complexities. While COMPAS and similar tools claim to objectively evaluate recidivism risks, a 2016 ProPublica study found that they falsely flagged Black defendants as high-risk at nearly twice the rate of white defendants [^3]. This paradox underscores a fundamental tension: AI systems optimized for efficiency often inherit the systemic inequities they were meant to resolve.

Ethical Imperatives: Bias, Transparency, and Accountability

The technical opacity of AI systems poses acute challenges to legal due process. Machine learning models operating as “black boxes” deny defendants a meaningful explanation of the risk scores influencing bail or sentencing decisions, which undermines the right to confront adverse evidence [^3]. This opacity intertwines with commercial secrecy, as proprietary algorithms like Lex Machina’s Outcome Analytics™ shield their decision logic behind corporate firewalls [^2]. UNESCO warns that such systems threaten foundational rights when deployed without auditing protocols to detect discriminatory patterns [^6].

Moreover, AI’s reliance on historical data creates an inherent conservatism ill-suited to evolving legal norms. As demonstrated in international arbitration contexts, algorithms trained on past rulings struggle to adapt to new human rights precedents or policy shifts, potentially calcifying outdated jurisprudence. Estonia’s AI judge pilot for small claims under €7,000 exemplifies this tension: while designed to reduce case backlogs, its algorithm risks institutionalizing biases present in historical rulings unless rigorously monitored [^4].

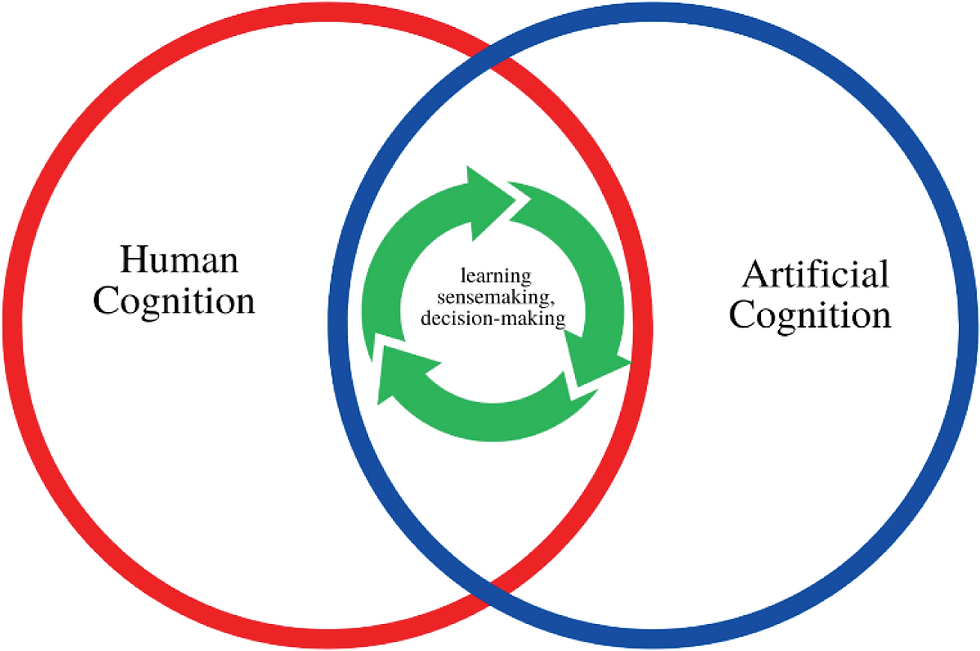

Safeguarding Justice: A Framework for Human-AI Collaboration

To harness AI’s benefits while mitigating risks, three safeguards appear essential:

1. Transparency Mandates: Courts should require disclosure of algorithmic training data, accuracy rates across demographics, and decision logic for any AI tool influencing judicial outcomes. The European Union’s AI Act offers a model, classifying judicial AI as “high-risk” and subject to rigorous documentation requirements [^6].

2. Human Oversight Protocols: Final legal determinations must remain with judges empowered to override algorithmic recommendations. Estonia’s experimental robot judges, while autonomous in adjudicating minor financial disputes, maintain human appeal avenues, a crucial check against computational errors [^4].

3. Bias Audits: Independent third parties should regularly evaluate AI tools for discriminatory impacts, as exemplified by the ACLU’s algorithmic accountability project assessing sentencing disparities [^3].
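To make the bias-audit safeguard concrete, here is a minimal Python sketch of one check an auditor might run: comparing false positive rates (people flagged high-risk who did not reoffend) between two groups. The function names, the record layout, and the data are hypothetical and invented purely for illustration; they are not drawn from COMPAS, ProPublica, or any real audit.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return the false positive rate per group.

    `records` is an iterable of (group, flagged_high_risk, reoffended)
    tuples. This layout is an assumption made for the example.
    """
    flagged = defaultdict(int)  # non-reoffenders flagged high-risk, per group
    total = defaultdict(int)    # all non-reoffenders, per group
    for group, flagged_high_risk, reoffended in records:
        if reoffended:
            continue  # only people who did not reoffend can be false positives
        total[group] += 1
        if flagged_high_risk:
            flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


def disparity_ratio(rates, group_a, group_b):
    """Ratio of group_a's false positive rate to group_b's."""
    return rates[group_a] / rates[group_b]


# Invented data: two groups, each with 10 non-reoffenders and 5 reoffenders.
records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6 + [("A", True, True)] * 5
    + [("B", True, False)] * 2 + [("B", False, False)] * 8 + [("B", True, True)] * 5
)

rates = false_positive_rates(records)
ratio = disparity_ratio(rates, "A", "B")
print(rates, ratio)
```

In this invented dataset, group A's non-reoffenders are flagged twice as often as group B's, even though both groups contain the same number of actual reoffenders. A real audit would also examine false negative rates, calibration, and sample sizes before drawing conclusions.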

Conclusion

AI’s courtroom integration need not culminate in a zero-sum contest between humans and machines. When deployed as assistive tools subject to robust oversight, AI can alleviate administrative burdens, enhance research quality, and surface latent biases in judicial decision-making. However, the automation of justice must never become an end in itself. By anchoring AI systems to transparency standards, equitable design principles, and enduring human rights frameworks, legal professionals can cultivate a future where technology strengthens rather than subverts the rule of law.

Sources

[^1]: “Harvey AI: The Legal AI Tool to Watch Out For,” OnTheMap Blog, April 17, 2024.

[^2]: “Lex Machina: Unmatched Legal Insights for Winning Your Case,” Dynamic Business, June 30, 2024.

[^3]: Columbia Human Rights Law Review, “Reprogramming Fairness: Affirmative Action in Algorithmic Criminal Sentencing,” April 5, 2016.

[^4]: “Estonia to Empower AI-Based Judge in Small Claims Court,” 150sec, April 10, 2019.

[^5]: Law Society of England and Wales, “Record Numbers Waiting Years for Trial,” December 14, 2023.

[^6]: UNESCO, AI and the Rule of Law, 2024.
